YEREVAN, Armenia — The Power of

Decentralization: Promise and Peril. This is the theme that kept global IT leaders busy at the 23rd[1] World Congress on Information Technology[2] (WCIT 2019), hosted by Armenia in its capital city, Yerevan.

How information and communications technology (ICT) is transforming our lives, and how the industry is preparing for the radical change that Artificial Intelligence[3] is bringing to all sectors, featured prominently in the discussion.

For Narayana Murthy[4], Founder and Chairman Emeritus of Infosys[5], the thought of machines rising in the future represents “a blessing for the prepared minds and a curse for the unprepared ones.”

During his keynote speech at WCIT, Murthy said

that “technology has the power to make life more comfortable for

human beings, as long as it is put into good use.” Speaking about

the benefits of adopting autonomous vehicles, Murthy said that

94 percent of accidents are caused by human error.

“Autonomous cars will reduce accidents, reducing deaths caused by

car accidents.”

Narayana Murthy, Founder and Chairman Emeritus of Infosys, speaks at WCIT in Yerevan. Source: Susan Fourtané for Interesting Engineering

Rise of the machines: The price of creating power

“Technology has always the power to make life more comfortable for human beings as long as it is put into good use.” - Narayana Murthy, Founder of Infosys

Big Data, Artificial Intelligence[6]

(AI), and Machine Learning[7] (ML) offer the

promise of unrevealed insight and efficiency; robotics, the promise

of freedom from physically dangerous or taxing manual labor, all in

ways never before imaginable.

However, at what price? The widespread deployment of increasingly sophisticated Big Data, AI, and automated robotic systems threatens to make entire categories of workers redundant.

Big Data and AI systems also threaten to distort the human decision-making process[8], subordinating the role

of human judgment.

Paramount questions arise: Should the cold logic of hard data be the master of human systems? What room will remain for judgment, morality, and human compassion? How much authority and decision-making are humans willing to cede to machines?

Where and when will it be necessary to draw the ethical and

practical line in the application of Big Data and AI in areas such

as medicine, where compassion and morality ought to reign over

clinical statistics?

How do we avoid being ruled by Big Data or automated systems? How do we control AI systems, already so complex that no single person can understand them, and keep them from going rogue and turning on us? These are some of the questions that everyone involved in the creation of AI, and everyone concerned about technology going wrong, should ponder. Experts on the subject discussed the topic in depth at WCIT.

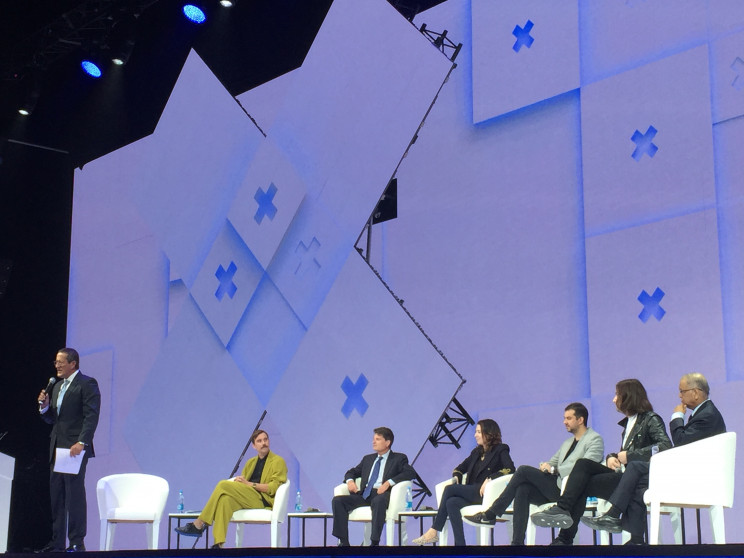

AI: What is your fundamental fear?

A panel at WCIT discusses whether AI in the future should have an ON/OFF switch. Source: Susan Fourtané for Interesting Engineering

Richard Quest[9], Business Anchor for CNN, moderated the panel, which comprised James Bridle[10], Multidisciplinary Artist and Journalist; Martin Ford[11], Author and Futurist; Daniel Hulme[12], Director of Business Analytics MSc, University College London and CEO of Satalia; Christopher Markou, Ph.D.[13], Leverhulme Early Career Fellow and Affiliated Lecturer at Jesus College, University of Cambridge; and Narayana Murthy[14], Founder and Chairman Emeritus of Infosys.

Richard Quest asked the members of the panel what their

fundamental fears about AI are. The panel established that as AI,

Machine Learning, and robotics advance, more jobs will be lost.

“That can be any job, including some white-collar jobs,” said

Martin Ford.

And although more jobs, and different jobs, will be created, will those new jobs be enough for everybody? he pondered. And what about the transition period? What are the big potential challenges that will arise in the next decade or two?

“Companies must make profit and create jobs,” said Narayana Murthy. According to research from Oxford University, Murthy said, 40 percent of jobs will be automated by 2025.

“Regulation is good when it’s not telling you what to do,” said

Christopher Markou. Discussing the limits of

these machines, he added that AI should not exist in places such as

classrooms. “Where we don’t want these things is what we should be

discussing,” he said.

AI machines are predicted to be the last invention of human

beings, and this could happen in our lifetime. “Adaptable machines

can be dangerous. If the machine, say autonomous weapons, have the

capacity to adapt to their environment and learn from it, then if

the machine is in a bad environment learning from humans whose

purpose in life is to damage other humans it means that is what the

machines will learn. And that can be unstoppable. Indeed.”

Richard Quest closed the discussion by asking the panel whether every machine should have an ON/OFF switch. Answers varied. Based on AI safety research conducted by the University of Cambridge, “the central authority must remain human,” Christopher Markou concluded.

What do you think, should every machine, including AI machines,

have an ON/OFF switch?


References

1. 23rd (wcit2019.org)
2. World Congress on Information Technology (wcit2019.org)
3. Artificial Intelligence (interestingengineering.com)
4. Narayana Murthy (www.infosys.com)
5. Infosys (www.infosys.com)
6. Artificial Intelligence (interestingengineering.com)
7. Machine Learning (interestingengineering.com)
8. human decision-making process (interestingengineering.com)
9. Richard Quest (edition.cnn.com)
10. James Bridle (jamesbridle.com)
11. Martin Ford (mfordfuture.com)
12. Daniel Hulme (www.linkedin.com)
13. Christopher Markou, Ph.D (www.law.cam.ac.uk)
14. Narayana Murthy (www.infosys.com)